A recent Which? investigation revealed that our personal contact details are freely collected and sold, and can be used to defraud people. Smart meters, phones and social media give deep insight into our lives, but it is not clear who owns the data or whether it is being sold or shared. As we introduce smart technology to assist people in their homes, we need safeguards to protect the vulnerable from the unintended consequences of capturing and sharing information.

This week I had a call from ‘Liam’, who confirmed my name, told me my address (which made him sound as if he had some connection with me) and then subtly began fishing for information about my finances.

“Just a quick call Jonathan, my database is showing me that you’ve got some outstanding finance with Lloyds Banking Group, this could be a loan, credit card, mortgage, is that right?”

I told him he’d need to be specific, so he waffled a bit about wanting to check he had the right information on his database and asked me to confirm my date of birth so he could give me more detail. I asked him to send a letter, since he had my address. It never came, but he did try the same ruse a few days later.

When I asked another recent cold caller how they got my name and number, I was told I must have signed up for something and agreed to share my details, which her company had then bought (I’m registered with the Telephone Preference Service, so they should not have called me anyway). The trade in personal information happens on a huge scale. Names, addresses and telephone numbers bought and sold legitimately can lead to irritation, but some people use that information maliciously, resulting in fraud.

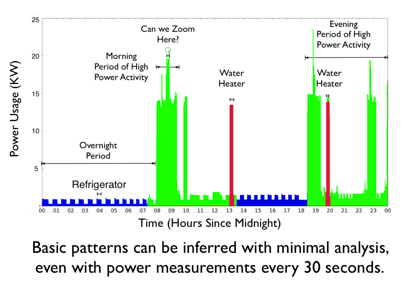

Technology gives organisations an intimate profile of our lives. For example, smart electricity monitoring can give remarkable insight into activity levels, even revealing the make of appliance used or how often the vacuuming is done: intimate detail about our lives and well-being.

That insight could be used for good or ill (for example, to work out when the house is empty), but there isn’t yet a visible focus on the safeguards needed to prevent harm from the unintended consequences of sharing information. With physical goods, manufacturers have an ongoing responsibility for the safety of their products. This occasionally results in recalls, such as the recent one for tumble dryers, and must always lead to careful design for safety over the lifetime of the product.

Similar safety standards are required for everyone who collects and processes information; after all, once data has been shared it can never be deleted. The Information Commissioner in the UK should oversee this, but that oversight has not yet produced a data safety culture of the kind we are used to in the physical world. Even politicians are tempted to play fast and loose with our information.

Machine learning and AI offer great promise: data collected from our homes could be used to identify incidents and early warning signs of decline, which individuals and carers will find beneficial. Tech companies and service providers need to be absolutely clear about how the data they collect is used, shared and disposed of. This might sound alarmist, but we know how simple information can be used to dupe people, and we can see that tech companies respond to people’s concerns about their services only after the fact, for example over fake news or cyberbullying.

I think a clear approach to looking after people’s data could be a competitive advantage, for example:

“XYZ service strongly protects information about activity in your home. It is only shared with carers with your explicit consent, it is never sold, and it is permanently deleted when you leave the service.”

This approach will both earn the trust of people wary of “Big Brother” technology and avoid a future scandal over the mis-selling of information.

This is particularly important for services used by the elderly and vulnerable, where information could be combined to build a map of where vulnerable people are living alone, when their carers habitually visit and how well off they are. The safeguards are needed not because pioneers of machine learning intend harm, but to ensure that they consider the unintended consequences of their work and, if necessary, put things right, as tumble dryer manufacturers have to do.